If we are talking about that order of magnitude, I'd really do some preliminary calculations, e.g. The other question is: how much do weeks 2 - 8 of calculation time add to the quality of model and performance evaluation? during my Diplom (= Master's) thesis, the method I was to use was fixed. Of course there are exceptions to this, e.g. If I'd have no idea how long calculations will take (on a scale covering 2 orders of magnitude), I'd start asking myself whether I know enough about the method to sucessfully apply it. The other way round: this means that a single model needs to have a calculation time that is well in the range of what one can try out. That leaves me with a calculation that is embarassingly paralles and linear in both runtime and memory at the outermost level. I use resampling validation for my models, I typically calculate in the order of magnitude $10^3$ surrogate models during this. In addition, I also look at memory, because for my data that often limits the parallelization I can ask for. If I think it's going to take long, I do some test runs, which basically allows me to check like suggests. This is why people either manually oversee their training loss when training neural networks ( tensorflow-board), or use different heuristics to detect when the loss minimization starts to plateau out ( early stopping). It is usually not possible to use a reduced set of inputs to train the network and extrapolate from this reduced training time to the full dataset as the network will typically fail to perform well when trained with few data.

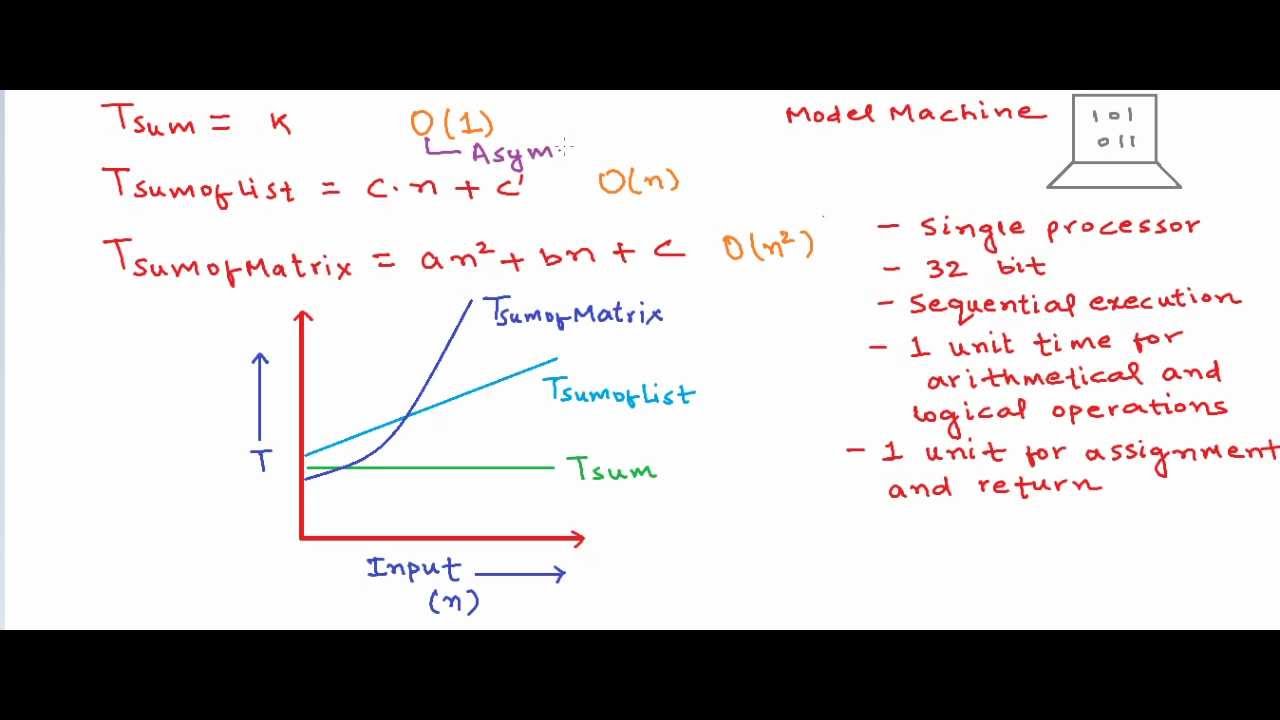

It is not even possible to extrapolate reliably the training duration after some training steps.Ĭ. I don't believe it is possible to tell in advance how long it will take to train a neural network, particularly a deep neural network (though I'm sure there is existing research trying to do this).ī. This depends completely on how complex is the function you are trying to approximate and how useful and noisy is the sample data you have.Ī. the number of gradient descent iterations you need to make to achieve a sufficiently small loss. The training time depends on how long it takes to approximate the relationship between your inputs and outputs sufficiently, i.e. However, the question asks about the total training time and not how much longer a forward pass will take if we increase the input. In the case of a neural networks it is the number of operations required for a forward and backward pass. Yes, we can quantify the complexity of an algorithm. But obviously, in practice it can make a difference whether $k=2$ or $k=1e6$.ĮDIT 1: Fixed a mistake in runtime based on comment from 2: Re-reading this reply after some years of working with neural networks, it seems quite educational, but unfortunately, utterly useless given the question. Alternatively, it is usually reported by the authors of the algorithm, when they publish it.įor example for SVM, the complexity bound is between $\mathit$. Usually, if you look at the wikipedia description of the algo, you can find the information if the bound is known. In either case, the complexity of an algorithm is reported using the big-O notation. The computer science guys live for these questions and they are very good at them. A simple way to time execution in Golang is to use the time.Now() and time.This question does not really depend on what type of an algorithm you run, it deals with computational complexity of algorithms and as such, it would be better suited for StackOverflow. 
Sometimes you just want to do some quick and dirty timing of a code segment.
